Perception and Interaction with Toy-Like objects for Developmental Robots (TOYBOT)

- Supervisor: Prof. Alexandre Bernardino

- Co-supervisor: Prof. José Santos Victor

Work carried out within the Humanoid Robotics Research Area of the ISR Computer and Robot Vision Lab (Vislab).

Keywords: Computer Vision, Machine Learning, Developmental Robotics.

Introduction

Developmental Robotics is a discipline that studies how robots can learn and develop complex skills by interacting with the world and with other agents. Like young infants, such robots start with only very basic core abilities but quickly learn how to survive and operate in their environment. This gives them a high degree of adaptability to different conditions, which allows them to cope with a much wider range of applications than classical (pre-programmed) robots.

Objectives

To bootstrap learning and actuation in the environment, the robot must have some initial abilities. Babies begin developing their skills by interacting with simply shaped, adequately sized and colorful toys, which simplify their perception and actuation. Over time, they learn which object features are relevant to behavior and which actions can be applied to objects (affordances). In the first phase of this work, we will pre-program some initial perceptual and action skills for interacting with toy-like objects. In the second phase, we will develop methods for learning object features and affordances that will lead to more complex skills.

Description

Perception of objects is a very complex problem in general. By considering objects with simple shapes and saturated colors in an uncluttered environment (the robot's playground), we will facilitate the detection and segmentation of objects, providing the robot with opportunities for interaction. Therefore, in the first part of the work we will develop methods for detecting toy-like objects and estimating their pose based on knowledge of their shape and color. We will also program some basic motor skills that will allow the robot to reach for the object and try to grasp it. In the second phase, exploratory learning methods will attempt to perform actions on objects with different characteristics and learn which object features matter for the interaction and what effects the actions have on the robot. In this way the robot learns a model of how the world behaves in response to its actions, which will allow it to predict, recognize and imitate other agents, eventually leading to social skills.
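As an illustration of why saturated colors in an uncluttered scene simplify detection, the following minimal sketch thresholds pixels on HSV saturation and brightness and reports the centroid of the selected region. It is not the method to be developed in this work: the function names (`saturated_mask`, `centroid`), the thresholds and the list-of-tuples image representation are assumptions chosen for illustration only.

```python
import colorsys


def saturated_mask(image, min_saturation=0.6, min_value=0.4):
    """Return a boolean mask marking strongly saturated, bright pixels.

    `image` is a list of rows; each row is a list of (r, g, b) tuples
    with components in 0..255. The thresholds are illustrative guesses.
    """
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            # Convert to HSV so that color purity (saturation) can be
            # tested independently of hue.
            _, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            mask_row.append(s >= min_saturation and v >= min_value)
        mask.append(mask_row)
    return mask


def centroid(mask):
    """Return the (row, col) centroid of the True pixels, or None if empty."""
    points = [
        (i, j)
        for i, row in enumerate(mask)
        for j, hit in enumerate(row)
        if hit
    ]
    if not points:
        return None
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)


# Hypothetical 3x3 scene: gray background with a two-pixel red "toy".
GRAY, RED = (128, 128, 128), (255, 0, 0)
scene = [
    [GRAY, GRAY, GRAY],
    [GRAY, RED, RED],
    [GRAY, GRAY, GRAY],
]
toy_mask = saturated_mask(scene)
toy_center = centroid(toy_mask)
```

On a real camera image the same idea would typically be applied with an image-processing library and followed by shape fitting to estimate the pose; the sketch only shows the color-based segmentation step.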

Prerequisites

Average grade > 14. A good knowledge of Signal and/or Image Processing is recommended.

Expected Results

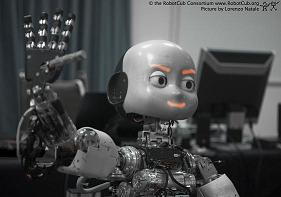

The developed methods will be implemented on the iCub humanoid platform. By the end of the project, the robot should be able to detect some toy-like objects, estimate their pose, and reach for and try to grasp them.

Object detection and localization will use model-based tracking techniques, which have important applications in Augmented Reality, Computer Games and Interactive Systems and may eventually lead to commercial products.

Related Work

Sample-Based 3D Tracking of Colored Objects: A Flexible Architecture, Matteo Taiana, Jacinto Nascimento, José Gaspar and Alexandre Bernardino, Proc. of the British Machine Vision Conference, BMVC2008, Leeds, UK, 1-4 Sept. 2008. Link: http://www.isr.ist.utl.pt/labs/vislab/publications/08-bmvc-tracking.pdf

Pose Estimation for Grasping Preparation from Stereo Ellipses, Giovanni Saponaro, Alexandre Bernardino, Proc. of the Workshop on Humanoid Robotics at CLAWAR 2008, Coimbra, Portugal, 8-10 Sept. 2008. Link: http://www.isr.ist.utl.pt/labs/vislab/publications/08-clawar-wshop.pdf